At Haus, we often think of incrementality education in terms of 101s, 201s, 301s, and so on. For instance, our Incrementality School series is a 101-level introduction to incrementality — while our deep dive into synthetic controls is unquestionably 301-level.

Why do we do this? Because incrementality knowledge tends to stack. You can start high-level and think of incrementality testing as an experiment-driven approach that measures causality rather than correlation. But once you understand that, you can dive into the nuances and nerd out with some real experiments.

Today, we’ll run the gamut. This guide will start simple with some definitions and examples so that we’re all on the same page about incrementality testing. But then we want to get a bit more advanced — so we’ll dive into incrementality’s advantages over other measurement approaches, as well as some of the more granular tests you can run. Then we’ll actually get into the weeds, walking you through how a hypothesis turns into a test — which then turns into actionable insights.

We’re kind of big on analogies here. So think of this guide as a zoom lens on a fancy camera. We’ll start with the broader concepts around incrementality. Then, slowly, we’ll zoom in and explore the nitty-gritty. By the end, you should know incrementality in your bones.

Building a culture of experimentation doesn’t happen overnight. But the first step on the journey is education. Just like you wouldn’t get behind the wheel without first learning the rules of the road (hopefully), you can’t get the most out of experimenting until you’ve brushed up on the basics. So let’s dive in — and get you on the road to serious business outcomes.

What is marketing incrementality?

Marketing incrementality uses counterfactuals to understand the true, causal impact of a marketing intervention. And if that definition sounds a bit Stat 201, let’s break it down even more simply: Marketing incrementality measures whether your marketing is driving business impact, and it does this by estimating what would happen if you took that marketing intervention away.

For instance, maybe you’re wondering whether your Meta campaigns are driving customers to convert. An incrementality test answers this question by withholding Meta ads from a portion of your audience. This portion is known as your holdout group. If sales are meaningfully lower in the holdout group than in the group that still sees ads, Meta is likely incremental for your business. After all, sales dip without those ads.
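To make the holdout logic concrete, here is a minimal sketch of the arithmetic, assuming a region-based test where each group's pre-test sales serve as its baseline. The function name `holdout_lift` and all numbers are hypothetical, and real providers use more sophisticated estimators than this simple scaled comparison.

```python
def holdout_lift(treatment_sales: float, treatment_baseline: float,
                 holdout_sales: float, holdout_baseline: float) -> dict[str, float]:
    """Estimate incremental sales from a holdout test (simple scaled-control sketch).

    The holdout group's test-period sales, scaled by the ratio of the two
    groups' pre-test baselines, stand in for the counterfactual: what the
    treatment group would have sold without the ads.
    """
    scale = treatment_baseline / holdout_baseline
    counterfactual = holdout_sales * scale
    incremental = treatment_sales - counterfactual
    return {
        "counterfactual": counterfactual,
        "incremental": incremental,
        "lift": incremental / counterfactual,
    }


# Hypothetical numbers: treatment regions had 9x the holdout's pre-test sales.
result = holdout_lift(treatment_sales=1_080_000, treatment_baseline=900_000,
                      holdout_sales=110_000, holdout_baseline=100_000)
print(result)
```

Here the holdout grew 10% over its baseline, so without ads the treatment group would have been expected to sell about 990,000. The extra 90,000 is the incremental lift, roughly 9%.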

It’s a bit like a randomized controlled trial in drug development. A pharmaceutical company will set up a test with two groups: One group receives the drug in question (the treatment group), while the other group receives a placebo (the control group). If medical outcomes are much better among the group receiving the drug, it’s likely that the drug is effective.

Running a holdout test is easier said than done. For an effective test that yields reliable insights, you need to take a statistically rigorous approach to sampling. Your treatment group and control group must be characteristically similar. Advanced methods like synthetic controls help you build highly similar groups — leading to results that are less error-prone and more precise.

Incrementality testing sets itself apart from other marketing measurement methods (e.g. MTA, traditional MMM) by measuring causality instead of correlation. For instance, if you spend more on Meta and sales increase, correlation-based models will say that Meta is working. But that alone doesn’t tell you whether Meta actually caused those sales; a seasonal spike or another channel ramping up at the same time could explain the increase.

Okay. Still wrapping your head around all that? We get it. So let’s zoom in a bit further and unpack a real, nuts-and-bolts example of incrementality in action.

Example: Measuring incremental purchases on Meta

Let’s say you’re a Growth Marketing Lead at an outdoor gear brand called Trek. As the name suggests, Trek sells sleek, minimal gear for the modern outdoor explorer. And yes, the gear looks great. Customers are always showing it off on Instagram.

So, being the wise growth leader you are, you figure your customers are… probably on Instagram, and you start running ads there. That’s the easy part. The harder part is figuring out whether these ads, served through Meta Advantage+ Shopping Campaigns (ASC), are actually driving incremental purchases. If you were to take away these Meta campaigns, would sales shrivel?

To figure this out, you design an incrementality test with two groups: One group sees Meta ads (treatment group), and the other group does not (control group). Simple enough. You track your primary KPI (new sales) in both of these groups over a two-week period. Then you compare sales between the two groups. You find that the group that sees Meta ads has many more conversions than the group that sees no Meta ads.

Platform metrics had shown that Meta ads were performing well, but your test reveals that Meta’s own reporting was actually underreporting how effective these ads were for Trek. That suggests you could increase your Meta spend and likely see significant sales gains.
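One common way to express "the platform was underreporting" is a ratio of test-measured incremental conversions to platform-reported conversions, sometimes called an incrementality factor. The sketch below is a minimal illustration with hypothetical Trek numbers; the function name is illustrative, and using the factor to adjust later platform numbers is one possible practice, not something this guide prescribes.

```python
def incrementality_factor(incremental_conversions: float,
                          platform_reported_conversions: float) -> float:
    """Ratio of test-measured incremental conversions to platform-reported ones.

    Above 1.0: the platform under-reports its causal impact.
    Below 1.0: the platform over-claims, taking credit for sales
    that would have happened anyway.
    """
    if platform_reported_conversions <= 0:
        raise ValueError("platform-reported conversions must be positive")
    return incremental_conversions / platform_reported_conversions


# Hypothetical two-week test results for Trek's Meta campaigns.
factor = incrementality_factor(incremental_conversions=1_500,
                               platform_reported_conversions=1_200)
print(f"Incrementality factor: {factor:.2f}")

# One possible use: rough calibration of a later week's platform-reported number.
calibrated = 800 * factor
```

A factor of 1.25 would mean Meta drove about 25% more conversions than it claimed during the test window, which is the situation described above.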

This is a fairly simple example. You might find it useful to add a post-treatment window to your test, which measures the delayed effects of your advertising. Such insights can help you better understand the impact of your marketing and pin down the best timing for campaigns.
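A post-treatment window simply keeps measuring after ads stop, so delayed conversions still get counted. The sketch below assumes weekly conversions for the treatment group and a counterfactual series (for example, the holdout scaled to the treatment group's size); the function name and numbers are hypothetical.

```python
def split_incremental(treatment: list[float], counterfactual: list[float],
                      test_periods: int) -> tuple[float, float]:
    """Split incremental conversions into in-test and post-treatment totals.

    treatment:      conversions per period (e.g. week) in the treatment group,
                    covering the test plus the post-treatment window
    counterfactual: expected conversions per period without ads, e.g. the
                    holdout group scaled to the treatment group's size
    test_periods:   number of periods the ads were live
    """
    if len(treatment) != len(counterfactual):
        raise ValueError("series must be the same length")
    lift = [t - c for t, c in zip(treatment, counterfactual)]
    return sum(lift[:test_periods]), sum(lift[test_periods:])


# Hypothetical weekly numbers: two test weeks, then one post-treatment week.
during, after = split_incremental([1_200, 1_260, 1_080], [1_000, 1_000, 1_000],
                                  test_periods=2)
print(during, after)
```

In this made-up case, 460 incremental conversions land during the two live weeks and another 80 arrive in the week after ads stop, conversions a test without a post-treatment window would miss.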

Even more nuance comes into play if you’re an omnichannel brand. For instance, maybe Trek customers find your gear on Instagram, go to your official site to learn more, but ultimately convert on Amazon. Choosing a measurement partner who tracks these halo effects can help you better understand the incremental impact of your marketing efforts across a variety of digital and physical sales channels.
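The halo effect matters because measuring only one sales channel can understate a campaign's impact. This minimal sketch assumes you have test-period sales by sales channel for the treatment group and its counterfactual; the channel names and figures are hypothetical.

```python
def incremental_by_channel(treatment: dict[str, float],
                           counterfactual: dict[str, float]) -> dict[str, float]:
    """Incremental sales per sales channel, plus the cross-channel total."""
    lift = {channel: treatment[channel] - counterfactual[channel] for channel in treatment}
    lift["total"] = sum(lift.values())
    return lift


# Hypothetical test-period sales by where the purchase happened.
treatment = {"site": 5_200, "amazon": 3_100, "retail": 1_900}
counterfactual = {"site": 4_800, "amazon": 2_600, "retail": 1_700}
lift = incremental_by_channel(treatment, counterfactual)
print(lift)
```

A site-only read would credit the campaign with 400 incremental sales; counting Amazon and retail raises that to 1,100.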

Why should you measure incrementality?

More and more companies are having that “lightbulb moment” where suddenly incrementality emerges as the best choice for their business. Here are a few reasons why more marketing teams are measuring incrementality:

- More accurate: Ad platforms tend to inflate their impact, and they won’t hesitate to take credit for a purchase that would have happened anyway. Incrementality tests separate those baseline conversions from truly incremental ones, helping teams more accurately assign credit to their different channels.

- Better budget allocation: The Trek C-suite gives you a clear directive: Profits, please – oh, and your budget’s getting smaller, too. Testing can help you identify inefficient channels and tactics so that you can reallocate budgets strategically.

- Stronger decision-making: In the Wild West of growth marketing, knowledge is power. Knowing what actually works and what doesn’t can help you make decisions based on proven performance rather than mere assumptions.

- Persuasive to key stakeholders: For teams that need to justify their decisions to executive stakeholders (see: all teams), incrementality test results are useful supportive evidence for major decisions and budgetary moves.

- Cross-channel insights: If your ad budget is in the tens of millions, you may be advertising across multiple channels and selling in multiple places. Fortunately, incrementality provides a unified measurement approach that works across digital and offline media.

- Future-proofing against data loss: As new and ongoing privacy regulations affect how and where marketers can collect user data, incrementality is a privacy-durable way to reduce your reliance on third-party cookies and platform-reported attribution.

- Competitive advantage: As more brands adopt incrementality solutions to optimize their marketing mix for maximum ROI, the downside of passing on incrementality only grows steeper.

What can you test with incrementality?

An advantage of incrementality testing is its flexibility. Whether you’re revamping your marketing mix with new channels or simply looking to make your business-as-usual marketing mix more cost-effective, incrementality testing offers plenty of options.

Test macro-level strategy or granular-level tactics

Maybe you’re considering a more macro change in strategy. For instance, you might have decided to launch a new channel. But you want to make sure you’re investing in this channel at an efficient level. So maybe you launch a 2-cell geo-holdout incrementality test to figure out how incremental this new channel is for your business.

The results show the channel is incremental for your business. But you’d still like to ensure you’re spending efficiently — so you decide to get more granular with some channel-level tests that measure different campaign tactics. You decide to create a 3-cell test:

- One part of your audience sees campaigns optimized for purchases

- One sees campaigns optimized for add-to-cart

- And one is your holdout group — they see neither
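In a 3-cell test, each treatment cell is compared against the same holdout. The sketch below assumes each cell reports test-period conversions alongside a baseline (such as pre-test conversions for the cell's regions); the cell names, function name, and numbers are hypothetical.

```python
def cell_lifts(cells: dict[str, tuple[float, float]], holdout: str) -> dict[str, float]:
    """Lift of each treatment cell versus a shared holdout cell.

    cells maps cell name -> (test-period conversions, baseline), where the
    baseline normalizes for cell size (e.g. pre-test conversions).
    """
    h_conv, h_base = cells[holdout]
    holdout_rate = h_conv / h_base
    return {
        name: (conv / base - holdout_rate) / holdout_rate
        for name, (conv, base) in cells.items()
        if name != holdout
    }


# Hypothetical results, with every cell sized to the same baseline.
cells = {
    "optimize_purchase": (1_150, 1_000),
    "optimize_add_to_cart": (1_080, 1_000),
    "holdout": (1_000, 1_000),
}
print(cell_lifts(cells, holdout="holdout"))
```

Here the purchase-optimized cell shows about 15% lift and the add-to-cart cell about 8%. In practice you would also check whether the gap between the two cells is larger than the test's uncertainty before declaring a winner.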

That’s what baby brand Lalo did when they partnered with Haus on a series of upper-funnel tests on Meta and TikTok. They found that they were missing out on a more upper-funnel audience, so they shifted their strategy and added more upper-funnel campaigns into their evergreen marketing strategy on TikTok and Meta. The result? Less advertising to already high-intent customers, and more advertising to new and engaged audiences.

More key questions incrementality helps answer

When an enterprise spends millions on ads across many channels, it's natural to have questions about marketing performance. Luckily, testing can help you answer:

- Whether to include or exclude branded search terms in PMax campaigns

- When your ad spend hits the point of diminishing returns

- Where customers convert: on your site, on Amazon, or at retail locations

- Which media mix drives the most incremental growth

- How incremental YouTube ads are for your brand

- Which paid marketing efforts are most incremental during peak seasonal periods
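Diminishing returns show up when a multi-cell test runs the same channel at several spend levels. The sketch below computes the extra incremental revenue gained per extra dollar between consecutive spend levels; the spend levels and results are hypothetical, with the zero-spend cell acting as the holdout.

```python
def marginal_returns(spend_levels: list[float],
                     incremental_revenue: list[float]) -> list[float]:
    """Incremental revenue gained per extra dollar between consecutive spend levels."""
    points = sorted(zip(spend_levels, incremental_revenue))
    return [
        (r2 - r1) / (s2 - s1)
        for (s1, r1), (s2, r2) in zip(points, points[1:])
    ]


# Hypothetical weekly spend per cell and measured incremental revenue.
spend = [0, 50_000, 100_000, 150_000]
inc_revenue = [0, 120_000, 190_000, 215_000]
print(marginal_returns(spend, inc_revenue))
```

With these made-up numbers, each extra dollar returns about $2.40, then $1.40, then $0.50. Once the marginal return falls below your break-even point, additional spend at that level is likely inefficient.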

Why measure campaigns with incrementality?

Marketing teams have plenty of measurement options these days — so why incrementality testing? How does it beat other tools? The advantages can mostly be summed up in one word: Causation. Incrementality measures causation while other methods focus on correlation.

Let’s explain what that means and how that happens — using a detailed, hopefully amusing example.

The power of establishing single-variable causation

Good news: Your bosses at Trek loved your experiments around Meta campaigns, so you’ve just been promoted to Head of Growth. Congrats! With this impressive new title (and sweet pay bump), you splurge a bit on some new clothes, which you’ll wear during the two days a week you spend at the Trek offices.

You’ve noticed that each time you wear your fancy new hat, you get lots of (friendly, workplace-appropriate) compliments from your coworkers. You’ve noted a correlation between “wearing the hat” and “getting compliments.” Nice. Hat it is.

But when you share this insight with the Head of Analytics at Trek, she scoffs. “You have so many confounding variables!” She advises you to randomly assign each office day as either a “hat day” or a “no-hat day.” Crucially, she says that aside from the hat, you must wear the exact same outfit each day, and you must talk to the exact same coworkers each time you come to the office.

You really like getting compliments, so you go along with this. Over the course of the month, you find you get an average of 0.46 compliments on hat days versus 0.32 on no-hat days. While your friend in Analytics says you might need to run a longer test and isolate even more variables, she confirms (with slight uncertainty) that the hat is an incremental driver of compliments.
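Your Analytics friend's "slight uncertainty" can be quantified with a permutation test: shuffle the hat/no-hat labels many times and see how often chance alone produces a gap as large as the one observed. The sketch below uses made-up per-day compliment counts (they do not reproduce the 0.46 vs. 0.32 figures above), and the function name is hypothetical.

```python
import random
import statistics


def permutation_test(hat: list[int], no_hat: list[int],
                     n_perm: int = 10_000, seed: int = 0) -> tuple[float, float]:
    """Observed mean difference (hat minus no-hat) and a one-sided p-value.

    The p-value is the share of random relabelings of the days whose
    difference is at least as large as the observed one.
    """
    observed = statistics.fmean(hat) - statistics.fmean(no_hat)
    pooled = hat + no_hat  # new list, so shuffling leaves the inputs intact
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)
        diff = statistics.fmean(pooled[:len(hat)]) - statistics.fmean(pooled[len(hat):])
        if diff >= observed:
            hits += 1
    return observed, (hits + 1) / (n_perm + 1)


# Hypothetical month: compliments received on each randomly assigned office day.
hat_days = [1, 0, 1, 0, 1]
no_hat_days = [0, 1, 0, 0]
observed, p_value = permutation_test(hat_days, no_hat_days)
print(f"difference={observed:.2f}, p={p_value:.2f}")
```

With only nine office days, a gap this size shows up by chance more than a third of the time, which is exactly why a longer test helps.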

Now let’s tie this example back to, you know, marketing. Think of your channels as parts of your outfit. There’s your hat (Meta). Your cool new sneakers (TikTok). Your fancy new T-shirt (YouTube). Which channel is leading to compliments (sorry, sales)? Just as you took off your hat, you can isolate these channels one by one to better understand how they all work together to help you hit your goals.

The problem with other measurement approaches? They don’t isolate a single variable. Without causal relationships at the core, you lack clear insight into which tactics are leading to which outcomes — sending you down the confusing path of multicollinearity. (Sorry. 301-level word.)

Disadvantages of multi-touch attribution (MTA)

There are plenty of disadvantages to multi-touch attribution (MTA). Here are a few that stand out when compared to incrementality testing:

- MTA relies on cookies and pixel tracking: This data is increasingly incomplete and unreliable as browsers and privacy rules restrict tracking. Incrementality testing doesn’t rely on pixels, PII, or user-level information, which makes it durable in the face of ongoing privacy regulations.

- Not enough love for the upper-funnel: Because many platform algorithms serve ads to high-intent customers that might have converted anyway, attributional methods like MTA over-index on lower-funnel conversions and underrate the impact of upper-funnel campaigns.

- Little insight into seasonality: MTA gives very little information about the impact of seasonality on your marketing, while incrementality testing can factor in seasonality as you pick your treatment and control groups.

Disadvantages of traditional marketing mix modeling (MMM)

The big issue with traditional MMM is trust. Because these models infer channel impact from correlations in aggregate daily KPI data, their conclusions rest on shakier ground, and stakeholders often have a hard time trusting the results. But that’s not all:

- Lack of transparency: Many MMMs are opaque about their methods. Unless you’re getting detailed documentation about what’s happening “under the hood,” it’s hard to know where a given model’s recommendations are coming from.

- Outdated data: Models are typically built on 2-3 years of historical data, while marketing strategy and performance often change seasonally, monthly, and even weekly.

- Bad data, bad model: An MMM’s insights are only as good as the data available to represent each marketing channel or business factor.

- Multicollinearity: When two or more marketing channels move together (say, you scale Meta and YouTube spend at the same time), the model struggles to separate their effects. This creates issues with bias (e.g. the reported lift is wrong) and precision (e.g. wider confidence intervals).

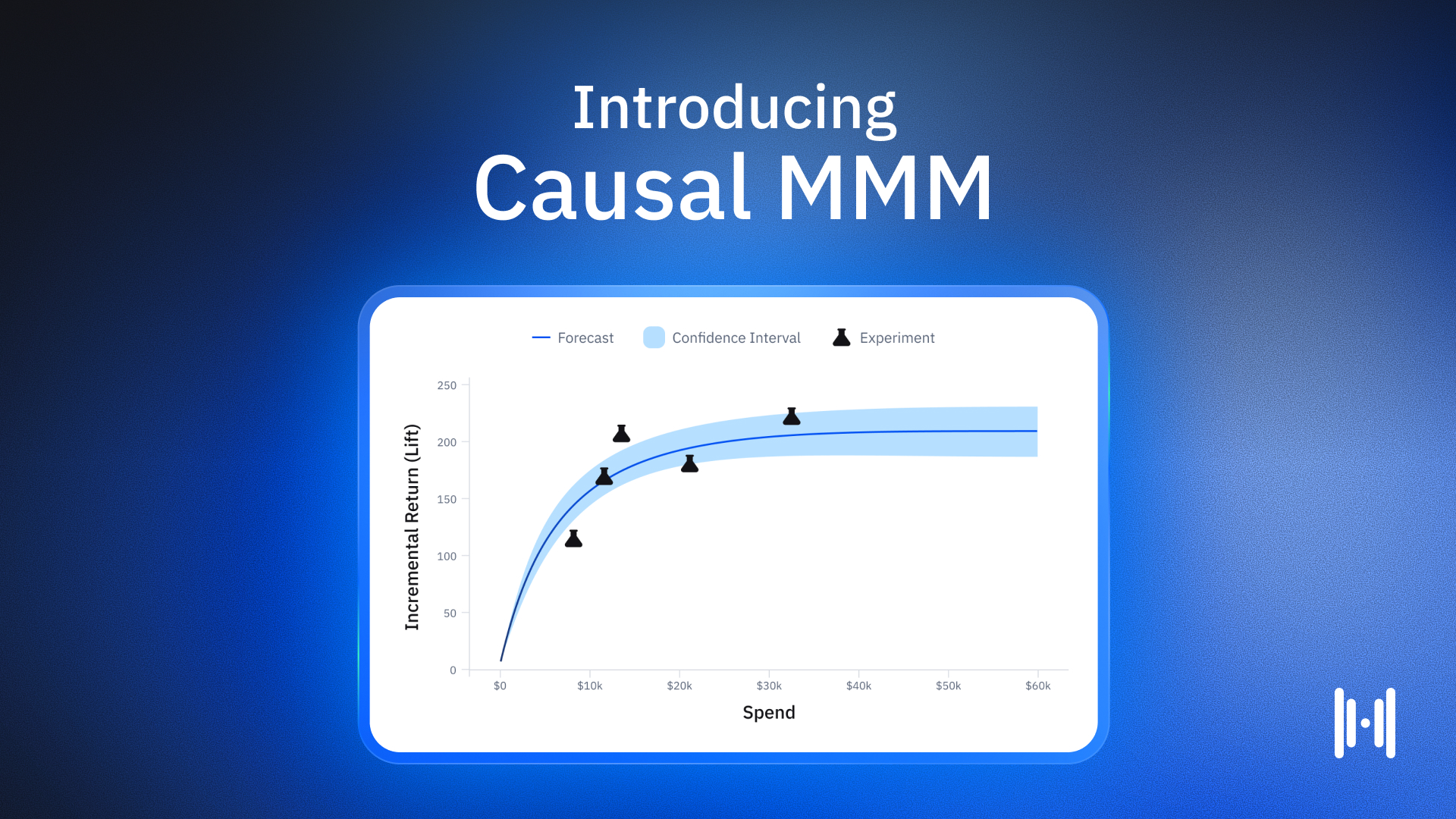

Of course, MMMs have the potential to help marketing teams, assuming they’re rooted in experiments. That’s why we’re building Causal MMM, due later this year.

When Should You Invest in Incrementality Testing?

While we’re clearly pretty bullish on putting incrementality testing at the center of your marketing stack, we also understand that it’s a big investment. Yes, the ROI can be significant — but you need to have the right organization for it.

You should probably start testing if...

- You spend on more than 1-2 marketing channels: More marketing channels simply means your business has more variables impacting business outcomes — which means you need a way to isolate these variables and understand their efficacy.

- You spend millions each month on marketing: Testing allows you to optimize high-budget campaigns efficiently. For instance, incrementality testing can help you determine the optimal spend level for each channel, putting each ad dollar to work.

- Your business is omnichannel: Face it, customers don’t always take the short route to purchase. Maybe they see an ad on Instagram, check out your site for more info, and then make their purchase on Amazon or in-store. Incrementality testing can help you unpack this winding customer journey.

- You’ve hit a growth plateau: Maybe platform algorithms are serving the same ads to the same high-intent customers — and growth is flat. Incrementality can help you shake things up, testing new channels and campaign types that can help you reach new buyers.

How to Run an Incrementality Experiment

Breaking news: Humans are weird. They’re unpredictable. Irrational. Emotional. Inconsistent. Indecisive. We’re supposed to believe you can use math to understand something as unpredictable and abstract as advertisements’ impact on consumer behavior?

Well, no one said incrementality testing was easy. In fact, testing effectively requires cross-disciplinary expertise. Data scientists. Applied mathematicians. Econometricians. Not to mention a team of growth experts who can help you actually act on those test results.

To give you a high-level overview of incrementality testing, here are the three main steps:

1. Design the Experiment

First, ask yourself what you want to test. If you’re stumped, assess your broader business goals. For instance, maybe growth is the goal. Your team wonders if more upper-funnel campaigns on your primary channel (Meta) might help you reach a new audience.

In this case, you might want to design two tests. The first is a 2-cell test that measures the incrementality of your existing lower-funnel campaigns. It includes a treatment group (who receive a lower-funnel Meta campaign) and a holdout group (who receive no Meta campaigns). The second test uses the same setup for your new upper-funnel campaign. Finally, you would compare the incrementality of each campaign type and reallocate spend accordingly.
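One way to compare the two tests on equal footing is cost per incremental acquisition: spend divided by the incremental customers each test measured. The sketch below uses hypothetical results and an illustrative function name; keep in mind each figure reflects the spend level tested, so shifting large amounts of budget may change the picture.

```python
def cost_per_incremental_acquisition(spend: float, incremental_customers: float) -> float:
    """Spend per customer who would not have converted without the campaign."""
    if incremental_customers <= 0:
        return float("inf")  # no measurable incremental customers
    return spend / incremental_customers


# Hypothetical results from the two 2-cell tests.
tests = {
    "lower_funnel": {"spend": 200_000, "incremental_customers": 2_000},
    "upper_funnel": {"spend": 100_000, "incremental_customers": 1_250},
}
cpia = {name: cost_per_incremental_acquisition(**t) for name, t in tests.items()}
best = min(cpia, key=cpia.get)
print(cpia, best)
```

In this example, upper-funnel campaigns acquire incremental customers for $80 each versus $100 for lower-funnel, which would support shifting some budget up-funnel.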

Key design considerations

Your testing provider will keep the following in mind as they design your experiment:

- Controls – Your holdout group and treatment group should be as similar as possible. Look for a provider who uses synthetic controls to accomplish this.

- Variable Selection – You want to only focus on one variable at a time. Your variables can, uh, vary. They might be a certain tactic, campaign type, or spend level.

- Primary KPI – Before any test, you’ll need to choose a primary KPI. (Testing providers like Haus also allow secondary KPIs.)

- Experiment Runtime – One of the biggest decisions is experiment runtime. A longer test generally yields more precise results, but it’s also more expensive, since the holdout group goes without ads for longer. Decide how long you’re comfortable “turning ads off” for a certain audience segment.
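To see why runtime and expected effect size are linked, here is a simplified power calculation using the textbook sample-size formula for a two-sided, two-proportion z-test. This is an assumption for illustration only: geo-level tests like the ones described here need calculations based on regional variance, and the baseline rate, expected lift, and daily traffic below are hypothetical.

```python
import math
from statistics import NormalDist


def users_per_group(baseline_rate: float, expected_lift: float,
                    alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate users needed per group for a two-sided two-proportion z-test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + expected_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def runtime_days(n_per_group: int, daily_users_per_group: int) -> int:
    """Days needed to reach the required sample in each group."""
    return math.ceil(n_per_group / daily_users_per_group)


# Hypothetical: 1% baseline conversion rate, hoping to detect a 10% relative lift.
n = users_per_group(baseline_rate=0.01, expected_lift=0.10)
print(n, runtime_days(n, daily_users_per_group=20_000))
```

Because required sample size grows with the inverse square of the effect, halving the lift you want to detect roughly quadruples the runtime.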

2. Launch the Test

Once you’ve picked your variables and KPIs, it’s time to test. Work with your provider to choose the best testing method for your channel of interest. For instance, with digital ads, a common approach is geo-location segmentation: withholding ads from selected regions. If you’re testing physical media, you’ll want to partner with an agency to execute.

Ideally, you’ll want to work with a provider that accounts for seasonality, and one that tests nationally, spreading treatment and holdout regions across the country rather than testing only a handful of markets. If your marketing intervention isn’t relevant to certain regions (e.g. FanDuel advertising in states where sports gambling isn’t legal), make sure your testing provider can exclude those regions (known as “geo-fencing”).
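Geo-fencing happens before regions are assigned to treatment or holdout. This minimal sketch drops excluded regions and reports how much of national baseline sales the remaining regions still cover, a quick check that the test stays broadly national. The region names, sales figures, and function name are hypothetical placeholders.

```python
def eligible_geos(geo_sales: dict[str, float],
                  excluded: set[str]) -> tuple[list[str], float]:
    """Drop geo-fenced regions and report the share of national sales still covered."""
    eligible = sorted(g for g in geo_sales if g not in excluded)
    total = sum(geo_sales.values())
    coverage = sum(geo_sales[g] for g in eligible) / total
    return eligible, coverage


# Hypothetical baseline sales by region; "west" is where the ads can't run.
geo_sales = {"north": 400.0, "south": 300.0, "east": 200.0, "west": 100.0}
geos, coverage = eligible_geos(geo_sales, excluded={"west"})
print(geos, f"{coverage:.0%}")
```

The eligible regions are then split into treatment and holdout groups, so results generalize to the 90% of the business where the campaign actually runs.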

3. Analyze Your Results

Analyze your KPIs in your treatment group versus your holdout group. The difference between these groups represents incremental lift.

Then, put those learnings to use. Maybe your upper-funnel holdout test shows that these campaigns are actually incremental for your business, so you shift some spend from your lower-funnel campaigns into upper-funnel ones. You find this improves overall customer acquisition cost (CAC) and marketing efficiency ratio (MER).

The reward for a good test? More tests. Maybe you’re so jazzed about these upper-funnel Meta campaigns that you now wonder whether upper-funnel campaigns might work on YouTube, too. Wouldn’t you know it: you’re well on your way to a culture of experimentation.
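CAC and MER are simple ratios, so checking them before and after a reallocation is straightforward. The sketch below uses common definitions (CAC as marketing spend per new customer, MER as total revenue per dollar of marketing spend); some teams fold other acquisition costs into CAC, and all figures here are hypothetical.

```python
def cac(marketing_spend: float, new_customers: int) -> float:
    """Customer acquisition cost: marketing spend per new customer."""
    return marketing_spend / new_customers


def mer(total_revenue: float, marketing_spend: float) -> float:
    """Marketing efficiency ratio: total revenue per dollar of marketing spend."""
    return total_revenue / marketing_spend


# Hypothetical monthly figures before and after shifting spend up-funnel.
before = {"spend": 500_000, "new_customers": 5_000, "revenue": 2_000_000}
after = {"spend": 500_000, "new_customers": 5_500, "revenue": 2_200_000}

for label, m in (("before", before), ("after", after)):
    print(label, round(cac(m["spend"], m["new_customers"]), 2),
          round(mer(m["revenue"], m["spend"]), 2))
```

With the same budget, CAC drops from $100 to about $91 and MER rises from 4.0 to 4.4 in this made-up scenario.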

Measure Incrementality with Haus

As we’ve discussed, incrementality helps you get a more honest view of your marketing. Instead of just following correlations, testing helps you determine how a change in tactics causes a change in business outcomes.

Haus was designed from the beginning to automate this process. Instead of being a testing service with a software product tacked on, Haus started as a software platform, which means we automated every step we could. Setup is seamless, which means you’ll have incrementality insights in weeks, not months. And analysis is made simple with easy-to-read insights and graphs that even the most data-phobic stakeholders will quickly understand.

You’ll also be working with a trusted, transparent group of experts. Haus’ team of Measurement Strategists has worked in analytics and growth at major ad platforms, sizable agencies, and some of the most recognizable DTC brands around. They won’t just throw you on the platform and hope for the best. Instead, they’re there at the beginning, middle, and end of the test process, aligning the testing roadmap with your broader company-building goals.

Haus goes the extra mile with science to offer added precision. Stratified random sampling ensures your treatment and control groups are balanced, eliminating selection bias. Additionally, synthetic controls help us achieve the “gold standard in causal inference,” using frontier methodologies to maximize experiment accuracy and precision.
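To illustrate the general idea of stratified random sampling (not Haus's actual implementation, which this guide doesn't detail), the sketch below ranks regions by baseline sales, cuts them into bands of similar size, and randomizes within each band. That way big and small markets land evenly on both sides. Region names, sales figures, and the function name are hypothetical.

```python
import math
import random


def stratified_assignment(geo_sales: dict[str, float], n_strata: int,
                          seed: int = 42) -> dict[str, str]:
    """Assign geos to treatment/control, randomizing within sales-size strata.

    Geos are ranked by baseline sales and cut into n_strata roughly equal
    bands; within each band, half go to treatment and the rest to control.
    """
    if n_strata < 1:
        raise ValueError("n_strata must be at least 1")
    rng = random.Random(seed)
    ranked = sorted(geo_sales, key=geo_sales.get, reverse=True)
    size = math.ceil(len(ranked) / n_strata)
    assignment = {}
    for start in range(0, len(ranked), size):
        stratum = ranked[start:start + size]
        rng.shuffle(stratum)
        half = len(stratum) // 2
        for geo in stratum[:half]:
            assignment[geo] = "treatment"
        for geo in stratum[half:]:
            assignment[geo] = "control"
    return assignment


# Hypothetical baseline sales for eight regions.
geo_sales = {"g1": 800, "g2": 790, "g3": 500, "g4": 480,
             "g5": 300, "g6": 310, "g7": 100, "g8": 95}
print(stratified_assignment(geo_sales, n_strata=4))
```

Pure random assignment could, by bad luck, put both of the largest regions in treatment; stratifying rules that out while keeping assignment random within each band.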

This combination of automation, support, and science has set Haus ahead of the curve — and it explains why more and more brands are turning to Haus to optimize their campaign spend and put their ad dollars to work.

Frequently Asked Questions

What is marketing incrementality testing?

Marketing incrementality testing is an experiment-driven approach that uses counterfactuals to measure the true causal impact of marketing interventions. By withholding ads from a holdout group, brands can determine if their paid media is driving sales that would not have happened otherwise.

How does incrementality testing differ from attribution?

Unlike traditional multi-touch attribution (MTA), which relies on correlation and pixel tracking, incrementality testing focuses on causation. It isolates variables to see how specific tactics change business outcomes, providing a more privacy-durable and accurate measurement of ROI.

When should a brand invest in incrementality testing?

Brands should consider investing when they spend on multiple channels, hit a growth plateau, or operate as an omnichannel business. It is particularly effective for media mix optimization and understanding complex customer journeys across digital and physical channels.

Can you test granular tactics with incrementality?

Yes. Beyond macro strategy, incrementality can test granular tactics like branded search terms in PMax, different campaign optimizations, and the impact of specific channels like YouTube ads.
