How Haus Scales Causal Marketing Measurement Without Human Bias

How automating hundreds of causal models a week – and grading them with a blind exam – yields better outcomes for businesses.

Feb 24, 2026

When I joined Amazon in late 2018, I was parachuted into the middle of the central marketing measurement organization. There was a large internal multi-touch attribution model, a marketing data cloud, an array of scientists, and a bit of regional testing code that was on fire with disappointed stakeholders. The scientist who had been working on regional testing had left for a new team a couple of months before I arrived. He had been working 60-hour weeks just to deliver two analyses a month.

This scientist cared deeply about the work. He spent those hours manually tuning models, dropping outlier data, and staring at charts to ensure the results "looked right." When I reached out to him with some questions, he gave me a warning I’ve never forgotten:

"This can’t be productionized; it’s a dead end for your career."

His argument was that high-quality causal marketing measurement was an artisan craft that required human intuition to navigate the noise. The work was difficult, and he thought that if you took the human out of the loop, the quality would collapse. If you can’t automate it, you can’t productionize it. And if you can’t productionize it, it’s a dead end at a tech company.

His career advice was wrong. But he was right about the difficulty.

The problem with "artisanal" data analysis

In marketing measurement, the signal-to-noise ratio is notoriously low. Just a few percentage points of lift in conversion can be enough to justify large marketing campaigns.

When a human analyst processes this data, they face dozens of small choices: Should they aggregate the data by day or by week? Should they exclude outlier dates like Black Friday? Should they include Los Angeles and New York?

Few objective principles guide these choices, and that can lead to dangerous situations.

Here is a common scenario: An analyst runs an experiment. The result comes back showing zero or even negative lift. That feels "wrong" to them. They know the marketing campaign was good. So, they decide to re-run the analysis, but this time they aggregate the data by week instead of by day. Suddenly, the result shows a positive lift. They accept that result because it aligns with their intuition (which may or may not be correct), and they discard the first one.

They aren't trying to lie. They are just looking for a result that feels comfortable. But if you make enough of these small, manual decisions that aren't guided by any objective principle, the data stops informing you of the truth. Instead, you end up manufacturing the result you wanted to see, even if you do your absolute best to make unbiased, rational decisions along the way.

Over time, this compounds, and a business may find itself making massive marketing investment decisions that lead them down the wrong path, ultimately wasting millions on marketing that isn’t driving incremental business outcomes.
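The specification shopping described above is easy to reproduce. Below is a minimal simulation, not Haus code, of a regional test where the true lift is exactly zero. The four day-selection "specifications," the 28-day window, and the noise levels are all hypothetical. One analyst fixes the specification in advance; the other tries every specification and keeps whichever lift looks best. Each individual run looks reasonable, yet only the first analyst's average lands near zero.

```python
import random
import statistics

N_DAYS = 28

# Hypothetical, defensible-sounding choices about which days to analyze.
SPECS = {
    "all days": list(range(N_DAYS)),
    "drop first week": list(range(7, N_DAYS)),
    "drop last week": list(range(N_DAYS - 7)),
    "weekdays only": [d for d in range(N_DAYS) if d % 7 < 5],
}


def null_experiment(rng: random.Random) -> tuple[list[float], list[float]]:
    """Daily conversions for treatment and control regions. True lift is zero."""
    treatment = [100 + rng.gauss(0, 10) for _ in range(N_DAYS)]
    control = [100 + rng.gauss(0, 10) for _ in range(N_DAYS)]
    return treatment, control


def lift(treatment: list[float], control: list[float], days: list[int]) -> float:
    """Relative lift of treatment over control on the selected days."""
    return sum(treatment[d] for d in days) / sum(control[d] for d in days) - 1


def run(trials: int = 2000, seed: int = 0) -> tuple[float, float]:
    """Average reported lift for a pre-registered analyst vs. a 'shopping' analyst."""
    rng = random.Random(seed)
    pre_registered, shopped = [], []
    for _ in range(trials):
        treatment, control = null_experiment(rng)
        estimates = [lift(treatment, control, days) for days in SPECS.values()]
        pre_registered.append(estimates[0])  # "all days", chosen before looking
        shopped.append(max(estimates))       # whichever spec "looks right"
    return statistics.fmean(pre_registered), statistics.fmean(shopped)


if __name__ == "__main__":
    honest, shopped = run()
    print(f"pre-registered mean lift: {honest:+.2%}")
    print(f"shopped mean lift:        {shopped:+.2%}")
```

No campaign ran, so any positive average is manufactured. The bias does not come from any single bad decision; it comes entirely from choosing the specification after seeing the result.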

To automate marketing measurement at Haus, we needed a referee. We needed a system that could evaluate the quality of a model objectively, without knowing or caring what the experimental result was.

And this is exactly what led to the development of the Haus Model Reliability Index.

The Model Reliability Index (MRI)

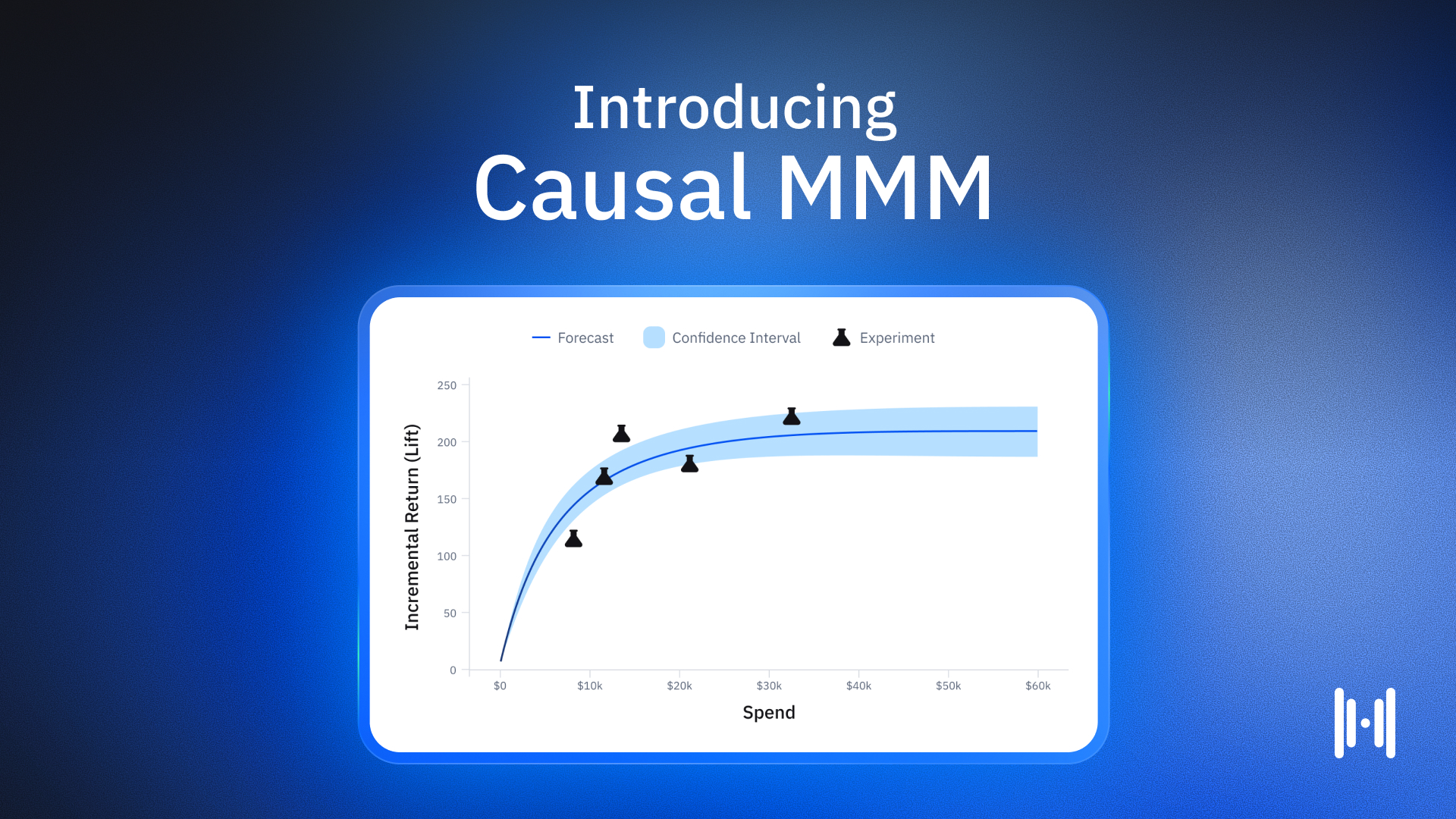

The Science team at Haus developed the Model Reliability Index (MRI) to answer a single question: Can this model recover the truth?

We don't judge a model based on how well it fits historical data — that’s easy. We judge it by how well it predicts the unknown. To do this, we run thousands of placebo tests (or A/A tests). We feed the model a dataset where we know for a fact that nothing happened — there was no experiment; both treatment and control had the same marketing activity. If the model looks at that data and reports a 10% lift, we know it is wrong.
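A single placebo test can be sketched as follows. This is an illustration under stated assumptions, not the Haus implementation: the synthetic regional history, the 42-day pre-period, and the use of a plain difference-in-differences estimator (the textbook baseline named later in this article) are all hypothetical choices. Because the data contain no campaign at all, any lift the estimator reports is pure error.

```python
import random
import statistics


def synthetic_history(rng: random.Random, n_regions: int = 20, n_days: int = 56) -> dict[str, list[float]]:
    """Hypothetical daily sales per region with a weekend bump and NO campaign."""
    history = {}
    for i in range(n_regions):
        base = rng.uniform(80, 120)  # regions differ in size
        history[f"region_{i}"] = [
            base + (10 if d % 7 in (5, 6) else 0) + rng.gauss(0, 5) for d in range(n_days)
        ]
    return history


def did_lift(history: dict[str, list[float]], treated: set[str], start: int) -> float:
    """Difference-in-differences effect on the treated group, relative to its counterfactual."""
    def group_mean(regions: list[str], days: slice) -> float:
        return statistics.fmean(v for r in regions for v in history[r][days])

    pre, post = slice(0, start), slice(start, None)
    treat = [r for r in history if r in treated]
    control = [r for r in history if r not in treated]
    pre_t, post_t = group_mean(treat, pre), group_mean(treat, post)
    pre_c, post_c = group_mean(control, pre), group_mean(control, post)
    # Counterfactual: the treated group's post-period level had it followed the control trend.
    counterfactual = pre_t + (post_c - pre_c)
    return (post_t - counterfactual) / counterfactual


def placebo_test(history: dict[str, list[float]], rng: random.Random, start: int = 42) -> float:
    """Randomly label half the regions 'treated' and estimate a lift that should be ~0."""
    regions = sorted(history)
    treated = set(rng.sample(regions, len(regions) // 2))
    return did_lift(history, treated, start)


if __name__ == "__main__":
    rng = random.Random(1)
    print(f"placebo lift: {placebo_test(synthetic_history(rng), rng):+.2%}")
```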

By repeating this process across thousands of simulations, we generate a score. This score quantifies the bias and variance of the estimator. It tells us exactly how trustworthy that specific model is for that specific type of data.
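The exact MRI formula isn't given here, so the following is only a hypothetical stand-in: it turns a batch of placebo estimates (true lift = 0) into bias, variance, and root-mean-squared error, using the identity MSE = bias² + variance. The function and field names are illustrative.

```python
import math
import statistics
from dataclasses import dataclass


@dataclass(frozen=True)
class PlaceboSummary:
    bias: float      # average error: how far off the estimator is on average
    variance: float  # how much the error jumps around from run to run
    rmse: float      # sqrt(bias**2 + variance): one error number, lower is better


def summarize_placebos(estimates: list[float], true_lift: float = 0.0) -> PlaceboSummary:
    """Summarize an estimator's errors across placebo runs with a known true lift."""
    errors = [e - true_lift for e in estimates]
    bias = statistics.fmean(errors)
    variance = statistics.pvariance(errors)  # population variance, so rmse**2 == mean squared error
    return PlaceboSummary(bias, variance, math.sqrt(bias**2 + variance))


if __name__ == "__main__":
    # Hypothetical placebo results for two estimators on the same dataset.
    overeager = summarize_placebos([0.09, 0.11, 0.10, 0.10])  # "finds" ~10% lift in nothing
    steady = summarize_placebos([0.02, -0.01, 0.00, 0.03])
    print(overeager.rmse > steady.rmse)  # True
```

Keeping bias and variance separate matters: an estimator that confidently reports 10% lift on placebo data has low variance but large bias, and the combined error exposes it.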

MRI enables Haus to do what the "artisan" data scientist said was impossible: productionize causal marketing measurement. This is why open-source models alone are not sufficient for building a production system that earns stakeholder trust. They have no governing metric like MRI that guides their use and instead have many knobs and levers that artisans can endlessly fiddle with.

Specifically, MRI supports two things: stress-testing the system and automating edge case handling.

1. The Gauntlet: Stress-testing the whole system

First, we use MRI calculations to govern all of our model deployment decisions. We call this The Gauntlet.

The Gauntlet is a registry of real, anonymized customer datasets. It includes the "easy" data, but it also includes the nightmares: datasets with jagged seasonality, massive outliers, and structural breaks.

Before we deploy any upgrade to our core estimation engine, the new code must run The Gauntlet. We check whether the new method improves the MRI score across that broad swath of data, compared with a textbook baseline (Difference-in-Differences).

If the new model produces a worse MRI score (meaning it’s less reliable), we kill it. It doesn't matter if it produces higher lifts that would make Haus customers happier. If the math isn't reliable, it doesn't ship.
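A deployment gate in the spirit of The Gauntlet might look like the sketch below. It is hypothetical: it assumes each estimator has already been scored on every Gauntlet dataset with a placebo error such as RMSE (lower is more reliable), and it uses a simple "beat the baseline on average" rule, since the actual acceptance criteria aren't described here. Note what the function does not take as input: the lifts either estimator produces.

```python
import statistics


def gauntlet_gate(candidate: dict[str, float], baseline: dict[str, float]) -> tuple[bool, list[str]]:
    """Decide whether a candidate estimator may ship.

    Both arguments map a Gauntlet dataset name to that estimator's placebo
    error (lower is more reliable). Hypothetical rule: the candidate ships only
    if its average error beats the baseline's. Datasets where it does worse
    are returned so regressions are visible either way.
    """
    if candidate.keys() != baseline.keys():
        raise ValueError("candidate and baseline must be scored on the same datasets")
    regressions = sorted(name for name in baseline if candidate[name] > baseline[name])
    ships = statistics.fmean(candidate.values()) < statistics.fmean(baseline.values())
    return ships, regressions


if __name__ == "__main__":
    # Hypothetical placebo RMSEs; the DiD baseline plays the "textbook" role.
    did = {"steady_retail": 0.010, "jagged_seasonality": 0.040, "structural_break": 0.060}
    new = {"steady_retail": 0.008, "jagged_seasonality": 0.050, "structural_break": 0.070}
    print(gauntlet_gate(new, did))  # (False, ['jagged_seasonality', 'structural_break'])
```

In this toy example the candidate is slightly better on easy data but worse on the "nightmare" datasets, so it is killed regardless of what lifts it would have reported.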

2. Stone Turner: Automating the edge cases

Second, we use MRI to automate the specific configuration for every single client analysis. We call this "Stone Turner."

Every business’s data is unique. One retailer might have huge spikes on weekends, while a B2B client might be dead silent on Sundays. A human analyst would traditionally look at this and manually tweak the settings (e.g., aggregating a B2B client’s data to weekly to smooth out the noise).

Stone Turner automates this by brute force. For every analysis, the system runs a grid of possible configurations. It calculates the MRI for every single combination on that specific dataset. Then, it selects the winner. Crucially, it picks the winner based on the MRI score, not the experiment result.

If the most reliable configuration (i.e., the one with the best MRI) says the marketing campaign was ineffective, that is the number we report. We don't shop around for a better lift estimate. This allows us to deliver "uncomfortable signals" with confidence and integrity. We can look a CMO in the eye and say, "We know this number is negative, but we also know the math that produced it is reliable. Here's what we recommend from here."
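The selection logic behind Stone Turner can be sketched as a grid search whose only objective is placebo error. Everything specific here is hypothetical: the three grid dimensions and the `placebo_error` and `estimate_lift` callables, which in practice would run placebo tests and the real estimator on the client's data. The structural point is that the real lift is computed only after the configuration is locked in, and it is returned whatever its sign.

```python
import itertools
from typing import Callable, Iterator

Config = dict[str, object]

# Hypothetical configuration grid for one client analysis.
GRID: dict[str, list[object]] = {
    "aggregation": ["daily", "weekly"],
    "drop_holidays": [False, True],
    "pre_period_weeks": [8, 12],
}


def all_configs(grid: dict[str, list[object]]) -> Iterator[Config]:
    """Yield every combination of grid values as a config dict."""
    keys = list(grid)
    for values in itertools.product(*(grid[k] for k in keys)):
        yield dict(zip(keys, values))


def run_analysis(
    grid: dict[str, list[object]],
    placebo_error: Callable[[Config], float],
    estimate_lift: Callable[[Config], float],
) -> tuple[Config, float]:
    """Pick the most reliable config, then (and only then) estimate the real lift."""
    best = min(all_configs(grid), key=placebo_error)  # the real lift is never consulted here
    return best, estimate_lift(best)  # reported as-is, even if negative


if __name__ == "__main__":
    def toy_placebo_error(cfg: Config) -> float:
        # Toy assumption: weekly data and a longer pre-period are more reliable
        # for a hypothetical B2B client that is silent on Sundays.
        error = 0.05 if cfg["aggregation"] == "daily" else 0.02
        error += 0.01 if cfg["pre_period_weeks"] == 8 else 0.0
        error += 0.0 if cfg["drop_holidays"] else 0.001
        return error

    config, lift = run_analysis(GRID, toy_placebo_error, lambda cfg: -0.012)
    print(config, f"{lift:+.1%}")
```

Passing the two steps in as separate callables makes the ordering explicit: nothing the estimator says about the campaign can feed back into which configuration wins.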

Automating the truth

Haus has moved from the artisanal era of my past, with its "two analyses a month," to estimating hundreds of causal models a week, almost entirely without human intervention.

We’ve found that the only way to scale the truth is, frankly, to stop touching it. There are times and places for a human touch. But by forcing our models to pass a blind, objective exam (the MRI) before they are allowed to evaluate your marketing, we have removed the "researcher degrees of freedom" that plague our industry and productionized high-quality causal measurement.

Sure, the work is difficult. But it’s hardly a dead end.

Article Authors

Joe Wyer is the Chief Scientist at Haus. Prior to Haus, he was a Senior Economist at Amazon, where he launched the Decision Science team, whose mandate was to build frameworks for scalable, consistent, high-quality decision-making. He holds a PhD in Economics from the University of Oregon.
