As a marketing leader, you’re contending with lofty pipeline goals, tight deadlines, and financial stakeholders to appease. Patience might be a virtue, but for the fast-moving marketer, it can feel like an unrealistic luxury.

That’s why teams are often so curious about test duration when they get into incrementality testing. Holding out audiences comes with opportunity costs, so the pressure to wrap up an experiment and “get back to business” is intense.

Haus Solutions Consultant Tarek Benchouia has seen this tension in action, but he advises teams to stay the course.

“We as marketers move very quickly,” says Tarek. “To hold tight for six weeks and let an experiment run is a very unnatural feeling. But the quantity of insight you get from a six‑week test really outweighs any of the feelings and nerves around being locked into an experiment.”

That’s why Tarek says the first thing teams should do before running an incrementality test is ask themselves this question: How long do I need to run the test to get a confidence band narrow enough for the result to be sufficiently powered and statistically significant?

In other words, how long do you need to run a test in order to trust the results?

As you go to answer that question, consider these four factors:

- Your brand’s consideration cycle: Big, infrequent purchases (e.g. furniture) require longer studies; impulse purchases (e.g. ecommerce, CPG) are better suited for shorter tests.

- Your other measurement sources: Reference PPS data, MMM ad stock curves, and historical test results to pinpoint time-to-impact. (Multi-touch attribution is not a reliable source here. By the time you have a customer’s click ID, they are already late in their buying journey.)

- Funnel dynamics and channel behavior: Results materialize differently depending on where your ad dollars are being allocated and the objective you set.

- Ideal test power: Smaller holdouts require more time. If you hold back only a small percentage of your audience, you need a longer runtime to reach statistical significance.

We’ll dive deeper into these different factors in the sections below, then offer some tactical advice on test duration baselines by channel.

The mathematics of patience: What is statistical power?

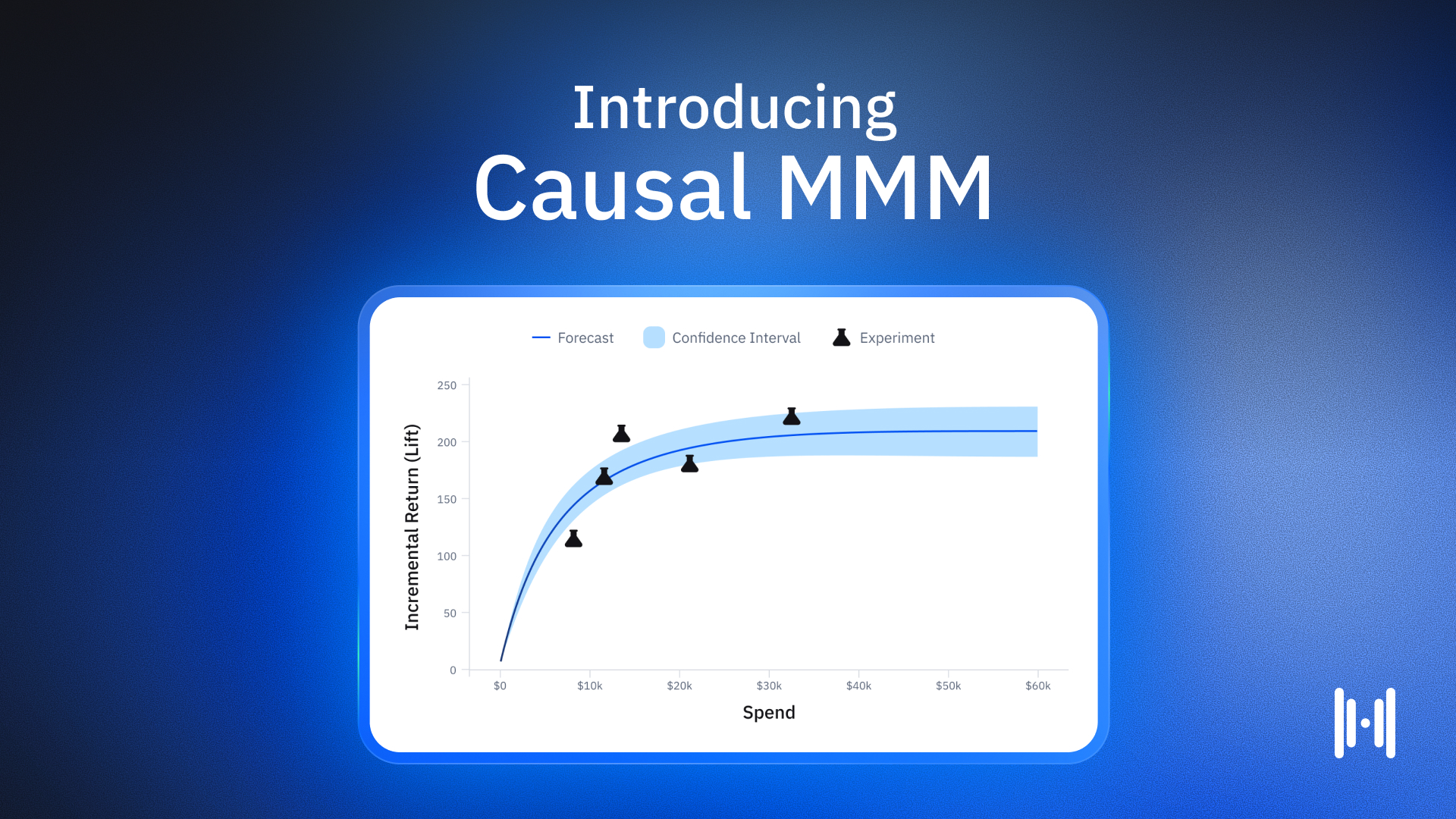

Your optimal incrementality test duration is primarily a function of statistical power. Power is the probability that your test will detect a lift if one actually exists. To achieve high power, you need a sufficient volume of conversions in both your treatment and control groups.

Three levers control this volume:

- Holdout Size: A 50/50 split collects data faster than a 90/10 split.

- Time: The longer you run, the more data you collect.

- Budget/Volume: High-spending accounts accumulate data faster.

If you cannot increase your budget or the size of your holdout group, time becomes your only lever. You must run the test until you have captured enough conversion events to distinguish the signal (ad impact) from the noise (random background variance).
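To make the relationship between runtime and power concrete, here is a minimal sketch. It assumes a simple user-level holdout analyzed with a two-proportion z-test under a normal approximation, which is a simplification and not necessarily how any particular platform computes power. The function names and the example inputs (daily audience, baseline conversion rate, expected lift) are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def power_two_proportions(p_control, relative_lift, n_treat, n_control, alpha=0.05):
    """Approximate power of a two-sided z-test on a difference in conversion rates."""
    p_treat = p_control * (1 + relative_lift)
    # Standard error of the difference between the two observed rates
    se = sqrt(p_treat * (1 - p_treat) / n_treat
              + p_control * (1 - p_control) / n_control)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    z_effect = (p_treat - p_control) / se
    # Chance the observed statistic clears the critical value
    # (the negligible opposite tail is ignored)
    return 1 - NormalDist().cdf(z_crit - z_effect)


def power_by_duration(daily_users, holdout_share, p_control, relative_lift, days):
    """Power after `days` of runtime, assuming a steady daily audience (an assumption)."""
    n_control = daily_users * holdout_share * days
    n_treat = daily_users * (1 - holdout_share) * days
    return power_two_proportions(p_control, relative_lift, n_treat, n_control)


# Hypothetical inputs: 20,000 users per day, 10% holdout,
# 2% baseline conversion rate, 10% relative lift
for days in (7, 14, 28, 42):
    print(days, round(power_by_duration(20_000, 0.10, 0.02, 0.10, days), 2))
```

With budget and holdout size fixed, the only input that changes across the loop is `days`, which is why power climbs as the test runs longer.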

“The longer you run that holdout, the tighter and tighter the confidence band is gonna be on the result,” says Tarek. But of course, you can’t run the test indefinitely; that comes with opportunity costs. So Tarek advises teams to ask next where the media sits in the funnel.

How does test duration vary by channel?

Different channels operate at different velocities, which changes how long you need to wait for results. Below are working baselines the Haus team uses as a starting point by channel. You should still adjust around your own consideration cycle and power targets, but these ranges reflect thousands of experiments across the platform.

What’s the ideal test duration for lower-funnel channels (e.g. search and retargeting)?

Channels that capture existing demand, such as branded search or bottom‑funnel social, tend to resolve quickly. Users are already close to purchase, so the gap between ad exposure and conversion is short.

In our branded search meta-analysis, the median test ran for 14 days with no post‑treatment window (PTW), and only 27% of tests added a PTW at all. When a PTW was included, it increased DTC lift by less than 10% on average, confirming that most of branded search’s value shows up during the active test window itself.

That’s why Tarek’s team is comfortable treating 2 or 3 weeks as a typical full duration (active + PTW) for true demand‑capture tactics in most businesses.

What’s the ideal test duration for Meta Conversion campaigns?

Tarek advises a slightly longer duration (active + PTW) for Meta Conversion campaigns at 3 to 4 weeks. That’s because social platforms sit higher in the funnel. Users are discovering products, not actively searching for them, which stretches the time between impression and conversion.

This advice is borne out in our Meta Report, where we found that the average experiment ran 18.6 active days plus an 8.8‑day PTW, for a total of 27.4 days.

What’s the ideal test duration for Meta Traffic, Reach, and Awareness campaigns?

In our Meta Report, we found that upper‑funnel Meta tests (Traffic, Reach, Awareness) ran even longer, averaging 34.4 days to capture the full impact of awareness‑driving campaigns. This builds in a bit more time for the platform to stabilize delivery. Plus, it allows time for post‑treatment effects to show up, which become more important as you move up‑funnel.

What’s the ideal test duration for Pinterest and Snap campaigns?

Tarek’s advice is 4-5 weeks for an incrementality test of this media. This length is especially important for prospecting and driving mid-funnel behavior.

What’s the ideal test duration for upper-funnel formats like YouTube and TV?

Video formats typically require the longest duration. A YouTube or CTV impression might happen weeks before the purchase, so short attribution windows dramatically understate impact.

In our guide to YouTube incrementality testing, we recommend 3–4 weeks of active testing followed by a 2‑week PTW, for a total of ~5–6 weeks. Across YouTube experiments on Haus, including that PTW increased incremental ROAS by 79% on average — almost doubling the apparent impact relative to in‑flight results alone.

Tarek extends the same logic across upper‑funnel video and brand campaigns more broadly:

- YouTube and TikTok video: 4-6 weeks total (treatment + PTW)

- Meta reach campaigns: 6-8 weeks total

- CTV / OTT: 4–6+ weeks total, often with a longer PTW for high‑AOV or retail‑heavy businesses

- True brand campaigns (multi‑channel brand building): 2–6 months total duration, often in a smaller “playpen” of geos so you can keep other tests running elsewhere

When is a post-treatment window necessary?

If you stop measuring the day your campaign ends, you are throwing away data.

In incrementality testing, the post-treatment window, also known as a cooldown or observation window, is the period after the active test where you stop spending but continue measuring. By adding a PTW, you turn a standard holdout experiment into a longitudinal study. Aggregated data consistently shows the value of this phase.

These longer windows become especially important around promotional periods. In our Cyber Week incrementality report, we found that during BFCM:

- Around 41% of incremental value for some campaigns appeared in the post‑treatment window

- Delayed effects were nearly 3x larger than in evergreen PTWs

- CTV in particular showed a median 344% improvement in efficiency when the PTW was included

Because CTV and other view-based channels are designed to create net‑new demand, not just capture it, building in a meaningful PTW is non‑negotiable if you want to see their real impact.
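The arithmetic behind a PTW is simple: spend stops, but incremental revenue keeps arriving, so incremental ROAS can only hold or rise. The sketch below assumes the common definition of iROAS as incremental revenue divided by spend. The function names and dollar figures are hypothetical and are not drawn from any Haus report.

```python
def iroas(incremental_revenue, spend):
    """Incremental return on ad spend: incremental revenue per dollar spent."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return incremental_revenue / spend


def ptw_uplift(in_flight_revenue, ptw_revenue, spend):
    """Relative change in iROAS when post-treatment revenue is counted."""
    without_ptw = iroas(in_flight_revenue, spend)
    # No additional spend occurs during the PTW, so only the numerator grows
    with_ptw = iroas(in_flight_revenue + ptw_revenue, spend)
    return with_ptw / without_ptw - 1


# Hypothetical campaign: $100k spend, $150k incremental revenue in flight,
# and a further $60k of incremental revenue during the PTW
print(f"{ptw_uplift(150_000, 60_000, 100_000):.0%}")
```

Because spend cancels out of the ratio, the uplift depends only on how much incremental revenue lands after the campaign ends relative to what landed during it.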

Across Haus customers, Tarek has seen brands steadily push toward longer tests as they mature their programs. The reason is simple: longer tests both tighten confidence intervals and let you see how incrementality evolves across different time periods (e.g., pre‑promo vs. promo vs. post‑promo), instead of giving you a single, narrow snapshot.

How does consideration cycle affect how long you run an incrementality test?

Beyond statistical power, your incrementality test duration must respect your business reality. A test cannot force a customer to buy faster than they naturally do.

If you are selling enterprise software with a 90-day sales cycle, a two-week test will return a “zero lift” result more often than not. This isn’t because the ads aren’t working; it’s because the test concluded before the harvest began.

Verticals differ significantly in how they accumulate lift. Subscription apps and digital services often benefit from faster feedback loops. For a fitness app measuring the impact of a New Year’s campaign, the conversion — an app install and trial start — happens within hours or days of the ad impression. In these cases, 7–10 day tests can often be viable if transaction volume is high enough.

Contrast this with a furniture retailer. A customer might click an ad for a new sofa, visit the showroom three days later, measure their living room the next weekend, and finally purchase online two weeks after the initial click. A 7-day test here would register zero revenue for that user, completely missing the campaign’s contribution.

For health and wellness brands, where consideration involves research and consultation, testing frameworks often require months rather than weeks. Guidelines for these verticals suggest observation periods that extend 4 to 8 weeks beyond campaign exposure.

Conversely, for low-AOV impulse purchases or food delivery, running an 8-week test is wasteful. You likely captured the necessary signal in the first 14 days, and continuing the test only increases the risk of contamination from external factors.

What are the risks of incorrect incrementality test duration?

Getting the timeline wrong introduces specific risks that can invalidate your investment in testing.

The risk of stopping too early is false negatives. You might conclude a channel is unprofitable simply because the conversions hadn’t matured yet. This error leads brands to pull budget from high-performing upper-funnel channels because they look inefficient in short windows.

The risk of running too long is contamination. The longer a test runs, the more likely an external event will break your control group. A competitor launches a massive promo, a snowstorm hits the East Coast, or your own team launches a site-wide sale. These variables affect treatment and control groups differently, muddying the causal link.

There is also the issue of cookie decay in user-level tests. Over a long user-level test (e.g., 60 days), the ability to track the control group degrades as cookies expire or users switch devices. Cookie decay is less of an issue for geo-based testing, which relies on stable geographic definitions rather than fragile browser tracking.

How do you work correct test timing into your experiment design process?

Determining your test length should be part of the design phase, not a decision made mid-flight.

Start by analyzing your historical time-to-conversion metrics. If 90% of your conversions happen within 7 days of a click, a 14-day test with a 7-day PTW might be sufficient. If your curve is flatter, you need to budget more time.
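One way to read your time-to-conversion curve is to find the number of days by which a target share of conversions has arrived. The sketch below assumes you can export a list of click-to-purchase lags in days. The function name and the sample lags are hypothetical.

```python
def days_to_cover(lags_in_days, share=0.90):
    """Smallest lag (in days) by which at least `share` of conversions have occurred."""
    if not lags_in_days:
        raise ValueError("need at least one conversion lag")
    ordered = sorted(lags_in_days)
    total = len(ordered)
    # Walk the sorted lags until the cumulative share reaches the target
    for count, lag in enumerate(ordered, start=1):
        if count / total >= share:
            return lag
    return ordered[-1]


# Hypothetical lags between first ad click and purchase, in days
lags = [0, 0, 1, 1, 2, 2, 3, 4, 6, 12]
print(days_to_cover(lags, 0.90))
```

If the returned value sits well beyond a week, that is a signal to budget a longer post-treatment window than the 7-day example above.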

Also, consider the holdout size. If you are nervous about opportunity cost and only want to hold out 5% of your audience, be prepared for a longer test. If you are willing to run a 50/50 split, you can achieve statistical significance much faster.
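The trade-off between holdout size and runtime can be sketched with a standard sample-size formula. This example assumes a user-level test analyzed with a two-sided two-proportion z-test, a steady daily audience, and an 80% power target. All names and inputs are hypothetical, and a geo-based design would need a different calculation.

```python
from math import ceil
from statistics import NormalDist


def days_needed(daily_users, holdout_share, p_control, relative_lift,
                alpha=0.05, power=0.80):
    """Approximate days of runtime needed to reach the target power."""
    p_treat = p_control * (1 + relative_lift)
    delta = p_treat - p_control
    z = NormalDist().inv_cdf(1 - alpha / 2) + NormalDist().inv_cdf(power)
    # Variance contribution per enrolled user, given how users are split
    variance_per_user = (p_treat * (1 - p_treat) / (1 - holdout_share)
                         + p_control * (1 - p_control) / holdout_share)
    total_users = z ** 2 * variance_per_user / delta ** 2
    return ceil(total_users / daily_users)


# Hypothetical inputs: 20,000 users per day, 2% baseline rate, 10% relative lift
for share in (0.05, 0.10, 0.50):
    print(f"{share:.0%} holdout: {days_needed(20_000, share, 0.02, 0.10)} days")
```

The small-holdout scenarios come out several times longer than the 50/50 split because the control group's term, divided by `holdout_share`, dominates the variance.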

Finally, communicate these timelines to finance and leadership stakeholders early. When they understand that the “extra” weeks are required to capture the full ROI, and that the fuller picture tends to make the numbers look better, they are usually willing to wait.

If you’re working with a measurement platform that has a robust service component, like Haus, you won’t need to worry about carefully calculating the test duration yourself; a dedicated Measurement Strategist will work with you to get the timing right based on their experiences with similar brands and previously successful testing roadmaps.

What are the benefits of correctly timing your incrementality tests?

The “right” duration for an incrementality test balances statistical necessity with operational reality. It is long enough to capture the full purchase cycle and achieve significance, but short enough to minimize contamination and opportunity costs.

Most brands eventually move away from ad-hoc duration guessing toward a standardized testing cadence.

By understanding the interplay between active runtime and the post-treatment window, you can build a measurement practice that provides confidence without slowing down your marketing operations. Haus helps teams design these parameters precisely, using historical data to calculate the exact power and opportunity cost before a test ever launches, so you can trust the results that follow.

FAQs about incrementality test duration

What is the minimum duration for an incrementality test?

Most platforms and experts recommend a minimum of 14 days for incrementality tests. A 14-day window accounts for weekly cyclicality (people shop differently on Mondays vs. Saturdays) and basic conversion lag. Tests shorter than two weeks often produce noisy, unreliable data that underreports true lift.

Does a post-treatment window count toward test duration?

Yes, the total duration of your experiment includes both the active ad delivery period and the post-treatment window. While you aren’t spending media dollars during the PTW, you must wait for this period to close before analyzing the final results. Ignoring this window often leads to underestimating the channel’s value.

Can I stop a test early if I see significant results?

Stopping a test early because initial results look promising, a practice often called “peeking,” is generally risky. Early wins can be false positives driven by short-term variance. It is better to stick to the pre-calculated duration required to achieve statistical power, which keeps results valid and repeatable.
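A small simulation shows why peeking is risky. The sketch below assumes no true lift and models the daily treatment-versus-control difference as standardized random noise, which is a deliberate simplification. It then compares how often a test looks "significant" when it is checked every day versus only on the final day. The function name, seed, and parameters are hypothetical.

```python
import random
from math import sqrt
from statistics import NormalDist


def false_positive_rates(n_sims=2000, days=28, alpha=0.05, seed=7):
    """Return (rate with daily peeking, rate with one final look) under no true lift."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    peeking_hits = 0
    final_hits = 0
    for _ in range(n_sims):
        running_sum = 0.0
        flagged_on_any_day = False
        z = 0.0
        for day in range(1, days + 1):
            # Daily difference between groups is pure noise in this scenario
            running_sum += rng.gauss(0.0, 1.0)
            z = running_sum / sqrt(day)
            if abs(z) > z_crit:
                flagged_on_any_day = True
        peeking_hits += flagged_on_any_day
        final_hits += abs(z) > z_crit
    return peeking_hits / n_sims, final_hits / n_sims


peeking_rate, final_rate = false_positive_rates()
print(f"daily peeking: {peeking_rate:.1%}, single final look: {final_rate:.1%}")
```

The single final look stays close to the nominal 5% error rate, while stopping at the first significant daily reading produces false wins far more often.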

How does seasonality affect test duration?

Strong seasonality can force you to run shorter tests to avoid overlapping with major peaks (like Black Friday), where control groups might behave unpredictably. Alternatively, if you must test during a volatile period, you may need to extend the duration or increase the holdout size to separate the ad signal from the strong background noise of seasonal shopping.
