For decades, MMM was the gold standard for big brands with steady budgets and plenty of patience, but those early models were slow, expensive, and often shrouded in mystery. Reports took months to produce, relied heavily on expert consultants, and were often out of date by the time they reached decision-makers.

This article covers what's actually changing: the technical shifts, the common failure modes, and what a modern implementation looks like in practice.

The shift from identity to aggregation

In-platform reporting and click-based attribution are two ways marketers often measure effectiveness today. But reported conversions are often double-counted across platforms, and a click-driven methodology misrepresents channel contribution. It doesn't account for the impact of an ad someone viewed and didn't click, even if they converted because of it.

MMM sidesteps these problems by operating at the aggregate level. Rather than tracking individuals across touchpoints, it looks at relationships between spend and outcomes over time, across channels. Because it doesn't depend on user-level tracking, MMM remains compliant with privacy regulations and cookie deprecation, offering a durable measurement framework for brands seeking long-term insights.

From correlation to causal calibration

Traditional MMM is built on correlational data, not causal relationships. While it can help explain what happened and identify trends, it doesn't explain what specific tactics caused specific outcomes.

This is the core critique of legacy approaches, and it's well-founded. A recent BCG report found that 68% of companies don't consistently act on MMM results when allocating budget. The trust problem is real, and it flows directly from the data quality problem.

MMM data suffers from two structural issues: statistical noise and multicollinearity. When all channels are moving up and down at the same time, as they typically do during peak season or major campaigns, a model may struggle to understand which channel is leading to a change in KPIs. Feed this scenario into two different MMMs and you might get two opposite recommendations on where to invest your budget.

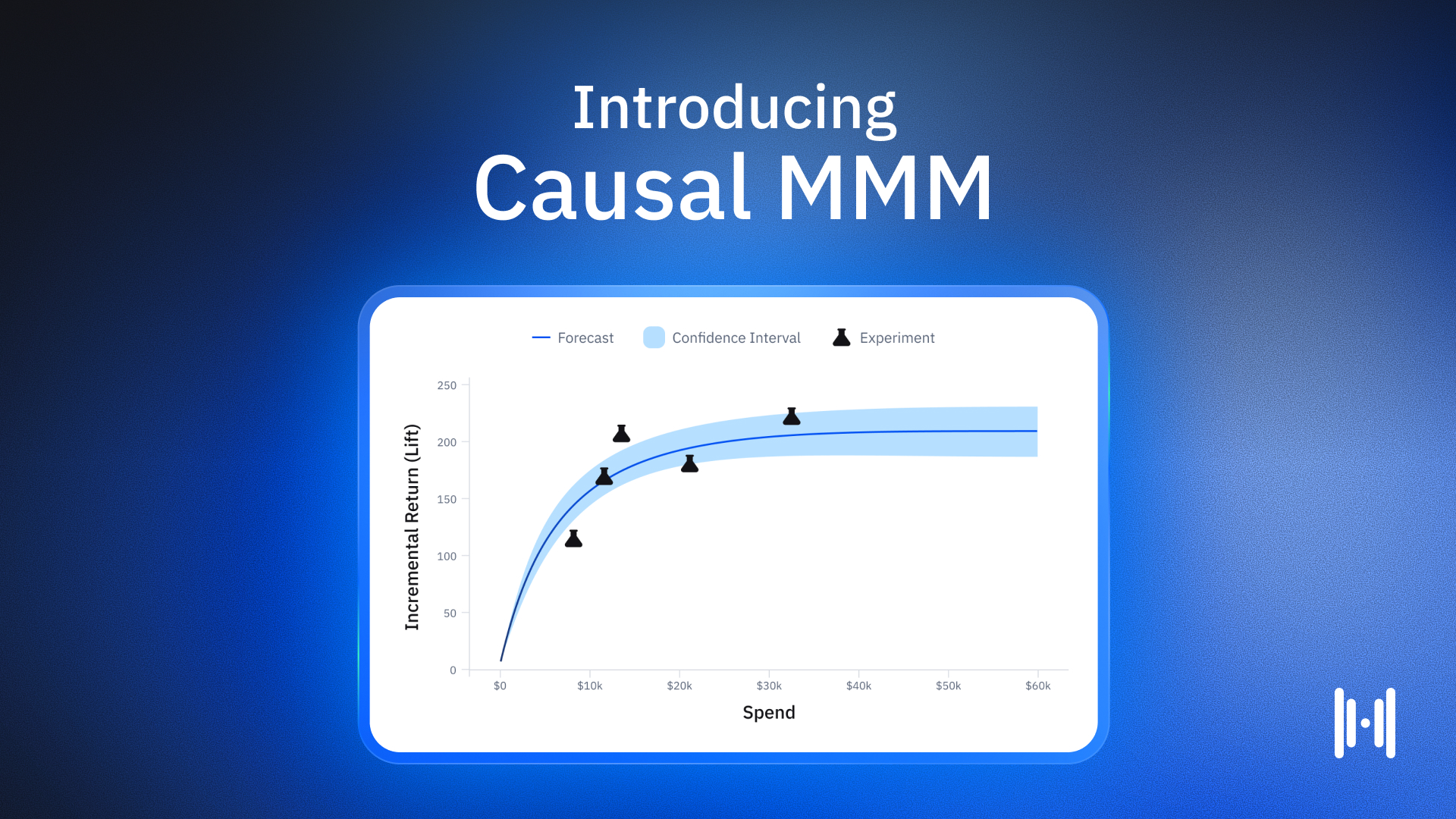

The emerging solution is causal calibration: grounding the model in experimental data rather than relying purely on observational patterns.

How calibration works

The concept of "identification" is central here. Identification is about teasing out an actual, real causal relationship between tactics and outcomes, going beyond looking for patterns and trends and allowing you to confidently say, "That is the actual effect." It's particularly important in MMM because when you can clearly identify the impact of one channel, it becomes easier to accurately measure the impact of the others.

The standard Bayesian approach treats experiments as suggestions, nudging the model toward experimental results while letting observational data still dominate. This gets closer to ground truth, but doesn't fully identify the return curve. A frequentist approach narrows the field to models that are more in line with experiments, but still leaves hundreds of plausible parameter sets.

The more rigorous approach uses experiments as the starting point, not a correction. Rather than using experiments as priors to improve MMMs, which is the right direction but not far enough, a proprietary algorithm can treat them as ground truth in the model: starting with experiments, then letting observational data fill in the gaps.

Once a channel's causal impact is anchored to experimental results, there is less variance left over in the KPI for the model to explain, making it easier to identify the impact of other channels too. Each new anchor point of identification reduces uncertainty: less noise, less confounding, and better overall identification, even for channels that haven't been tested yet.

Granularity and speed replacing static reports

One of the practical complaints about traditional MMM has always been the lag. Building and maintaining a model often requires a dedicated team of 8 to 10 or more specialists. Businesses often spend 3 to 6 months just hiring and mobilizing that team, with another 12 to 24 months iterating to optimize practices and processes.

Modern approaches have compressed this dramatically. A common misconception is that MMM refreshes take a long time. Modern Causal MMM can refresh every week, allowing teams to take an iterative, dynamic approach to budgeting and strategy.

Geo-level hierarchy

The other granularity problem is channel-level aggregation. Media planning is often granular but MMM results often aren't. If a model outputs total Meta spend, it doesn't map to how media is actually bought: Meta prospecting campaigns, Meta retargeting, and so on. Bridging the gap between planning and measurement takes significant work when the results aren't aligned with how a team actually plans.

Modern MMMs built on geo-level experimental data have a structural advantage here because the underlying experiments already operate at the market level, allowing the model to reflect geographic variation in channel performance rather than collapsing everything to a national average.

Integrating reach, frequency, and search demand

One of the ways better models differ from legacy ones is in how they handle inputs that aren't straightforward spend figures.

Reach and frequency

Channels like linear TV or connected TV are typically bought on reach and frequency, not click-based outcomes. A model that only ingests dollar figures misses the underlying delivery mechanism. Modern approaches incorporate reach and frequency data directly, which allows the saturation curves for these channels to reflect actual exposure rather than just spend.

Controlling for organic demand

During peak season, organic demand is up, KPIs are up, and ad spend is also up. Everything is going up at the same time. When you feed that into a model, you're seeing the pattern that everything is going up, but you don't know which tactics are causing which outcomes.

Controlling for organic demand, through branded search volume, category interest indices, or similar proxies, is one of the more consequential choices in model design. Models that don't account for this will systematically overattribute revenue to paid channels during periods of high organic intent.

Common pitfalls in modern modeling

The organic demand trap

Ad platforms like Meta and Google are good at finding people who are already likely to convert. Their algorithms are optimized to deliver ads to the right people at the right moment. However, this doesn't mean the ad caused the purchase. It was simply present in the face of existing intent. A model that doesn't control for organic demand will assign those conversions to the paid channel, inflating its measured contribution and directing budget toward spend that isn't actually moving behavior.

Ignoring geo heterogeneity

Markets behave differently. A national-level model averages those dynamics away, which can produce budget recommendations that are wrong for most individual markets. Models that incorporate geo-level variation, both in the experimental calibration and in the ongoing observational data, are better equipped to surface these differences and inform more targeted allocation decisions.

The open source ecosystem

A wave of open-source frameworks like Robyn and LightweightMMM has democratized access to MMM. These allow internal teams to build their own models with flexibility and transparency, but they often require significant technical expertise to implement and maintain. They're best suited for brands with an in-house data science team and expert knowledge, though they're unlikely to be a best-in-class solution at scale.

The open-source movement has also made modeling assumptions more visible. When a vendor builds a black-box model, the priors, the assumptions about how channels behave, are hidden. If a vendor didn't ask you about priors, they were quietly making assumptions about your business behind the scenes. Open-source tools force that conversation into the open, which has raised the standard for what transparency looks like across the category.

Implementing a modern measurement stack

The measurement data contract

Before any model can run, someone has to define what data goes in and at what frequency. The core requirements: channel-level spend data broken out to the campaign or line-item level where possible, business KPIs at a consistent daily or weekly grain, and external factor controls for promotions, product launches, and seasonality.

A model trained on inconsistent or incomplete data will produce estimates with wide uncertainty bands, and wide uncertainty bands produce recommendations that are hard to act on.

The experimentation roadmap

The more experiments you have to draw from, the more powerfully informed your MMM will be. A strong experimental roadmap is key to getting started with Causal MMM.

The practical question is sequencing. Start with the channels where incrementality is most uncertain or where spend is highest. Continuously testing testable channels can improve the model's estimates for untestable channels, because untestable channels are estimated relative to everything else. Once testable channels are grounded in experiments, the model can better identify how the remaining channels explain the leftover variation in the data.

The modern MMM operating model

Roles and responsibilities

A Causal MMM requires clarity on who owns what. The measurement team, whether in-house or a vendor, owns model configuration, experiment design, and output interpretation. The media team translates model outputs into actual budget decisions. Finance needs enough visibility into the methodology to trust the outputs and incorporate them into planning cycles.

What makes Causal MMM more legible to finance teams is that it isn't a black box. They can see the origin of the recommendations, which they can actually trust. The response curves come from experiments the team already understands, providing ongoing visual validation.

Planning cadence

With weekly model refreshes, the planning cadence can shift from quarterly recalibration to a continuous loop. The model updates as new data comes in, experiments that complete get incorporated, and budget decisions get made against a current picture rather than a months-old one.

Marketing mix modeling FAQs

What is the difference between MMM and multi-touch attribution (MTA)?

MMM uses historical aggregate data, while MTA tracks user-level digital behavior across marketing touchpoints. MTA assigns "credit" to different marketing tactics, while MMM calculates holistically how the media mix contributes to sales. MTA is increasingly constrained by privacy regulations and cookie deprecation. MMM is not, because it never relied on individual-level tracking.

How often should I refresh my marketing mix model?

Companies often update their MMM every few months, but this isn't always enough to reflect new pricing, new products, or new marketing conditions. Modern Causal MMM can refresh weekly, enabling a dynamic, iterative approach to budgeting and strategy.

What is "calibrated" or "causal" MMM?

A calibrated MMM incorporates data from incrementality experiments to anchor the model's channel-level return curves to real causal effects rather than correlations. A causal MMM goes further, treating experimental results as ground truth rather than as a nudge or prior weight. When a channel's impact is anchored this way, the model is identified, meaning it has found a true cause-and-effect relationship, not just a pattern in the data.

Does MMM require a data scientist to implement?

Traditional MMM implementations typically required teams of 8 to 10 specialists and multi-year ramp times. Open-source frameworks have democratized access and allow internal teams to build models with flexibility and transparency, but they still require significant technical expertise to implement and maintain. Modern SaaS platforms have reduced that burden considerably.

Can MMM measure offline channels like TV and direct mail?

Yes. This is one of MMM's structural advantages over click-based attribution. An MMM analyzes historical spend and outcomes across all channels, including TV, radio, out-of-home, and direct mail, teasing apart the real contribution of each. Offline channels can also be incorporated into the experimental calibration layer: geo holdout tests on linear TV can produce causal estimates that anchor the model's TV response curve in the same way digital experiments do.

.png)

.png)

.png)

.png)

.png)

.png)

.png)

.avif)

.png)

.png)

.png)

.png)

.png)

.avif)

.png)

.png)

.png)

.png)

.png)

.png)

.png)

.png)

.png)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.png)

.avif)

.avif)

.avif)

.avif)

.avif)

.avif)

.avif)

.avif)

.avif)

.avif)

.png)

.avif)

.png)

.avif)